Semantic compression

In natural language processing, semantic compression is the process of compacting the lexicon used to build a textual document (or a set of documents) by reducing language heterogeneity while maintaining text semantics. As a result, the same ideas can be represented using a smaller set of words.

Semantic compression is lossy: some data is discarded, and the original document cannot be reconstructed by reversing the process.

Semantic compression by generalization

Semantic compression is achieved in two steps, using frequency dictionaries and a semantic network:

- determining cumulated term frequencies to identify the target lexicon,

- replacing less frequent terms with their hypernyms from the target lexicon.[1]

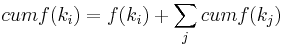

Step 1 requires assembling word frequencies and information on semantic relationships, specifically hyponymy. Moving upwards in the word hierarchy, a cumulative concept frequency is calculated by adding the sum of the hyponyms' frequencies to the frequency of their hypernym:

$$\mathrm{cumf}(k_i) = f(k_i) + \sum_{j} \mathrm{cumf}(k_j)$$

where $k_i$ is a hypernym of $k_j$. Then a desired number of words with the top cumulated frequencies is chosen to build the target lexicon.
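The first step can be illustrated with a minimal Python sketch. The term frequencies, the hyponym-to-hypernym map, and the function names `cumulated_frequencies` and `target_lexicon` are hypothetical and chosen only for illustration; the sketch also assumes that each term has at most one hypernym and that the hierarchy contains no cycles.

```python
from collections import defaultdict

# Hypothetical toy data: raw term frequencies and a hyponym -> hypernym map.
freq = {"insect": 5, "bee": 3, "wasp": 2, "animal": 1, "dog": 4}
hypernym_of = {"bee": "insect", "wasp": "insect",
               "insect": "animal", "dog": "animal"}


def cumulated_frequencies(freq, hypernym_of):
    """Return the own frequency of each term plus that of all its hyponyms."""
    # Invert the map so that each hypernym lists its direct hyponyms.
    hyponyms = defaultdict(list)
    for child, parent in hypernym_of.items():
        hyponyms[parent].append(child)

    cache = {}

    def cumf(term):
        # Own frequency plus the cumulated frequency of every direct hyponym.
        if term not in cache:
            cache[term] = freq.get(term, 0) + sum(cumf(h) for h in hyponyms[term])
        return cache[term]

    terms = set(freq) | set(hypernym_of) | set(hypernym_of.values())
    return {t: cumf(t) for t in terms}


def target_lexicon(cum, size):
    """Pick the `size` terms with the highest cumulated frequency."""
    # Ties are broken alphabetically so that the result is deterministic.
    ranked = sorted(cum, key=lambda t: (-cum[t], t))
    return set(ranked[:size])


cum = cumulated_frequencies(freq, hypernym_of)
lexicon = target_lexicon(cum, 2)
print(cum["insect"], cum["animal"], sorted(lexicon))
```

With this toy data, "insect" accumulates the counts of "bee" and "wasp", and "animal" accumulates the counts of everything below it, so the more general concepts rise to the top of the ranking.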

In the second step, compression mapping rules are defined for the remaining words, so that every occurrence of a less frequent hyponym is handled as its hypernym in the output text.
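The mapping step might be sketched as follows. The toy hierarchy, the lexicon, and the function names `build_mapping` and `compress` are assumptions made for illustration. A term outside the target lexicon is walked up its hypernym chain until a lexicon term is found, and is left unchanged if no ancestor belongs to the lexicon.

```python
# Hypothetical toy data: a hyponym -> hypernym map and a chosen target lexicon.
hypernym_of = {"bee": "insect", "wasp": "insect", "colony": "group"}
lexicon = {"insect", "group", "build"}


def build_mapping(terms, hypernym_of, lexicon):
    """Map each term to its nearest ancestor that belongs to the lexicon."""
    mapping = {}
    for term in terms:
        current = term
        seen = set()  # guards against accidental cycles in the hierarchy
        while (current not in lexicon
               and current in hypernym_of
               and current not in seen):
            seen.add(current)
            current = hypernym_of[current]
        # Keep the term unchanged when no ancestor is in the lexicon.
        mapping[term] = current if current in lexicon else term
    return mapping


def compress(tokens, mapping):
    """Apply the mapping rules to a token sequence."""
    return [mapping.get(tok, tok) for tok in tokens]


tokens = ["wasp", "and", "bee", "build", "colony"]
mapping = build_mapping(set(tokens), hypernym_of, lexicon)
compressed = compress(tokens, mapping)
print(compressed)
```

This sketch works on lemmatized tokens only; restoring inflection and stop words for a readable output would be a separate step.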

- Example

The text fragment below has been processed by semantic compression, with less frequent words replaced by their hypernyms.

They are both nest building social insects, but paper wasps and honey bees organize their colonies in very different ways. In a new study, researchers report that despite their differences, these insects rely on the same network of genes to guide their social behavior. The study appears in the Proceedings of the Royal Society B: Biological Sciences. Honey bees and paper wasps are separated by more than 100 million years of evolution, and there are striking differences in how they divvy up the work of maintaining a colony.

The procedure outputs the following text:

They are both facility building insect, but insect and honey insects arrange their biological groups in very different structure. In a new study, researchers report that despite their difference of opinions, these insects act the same network of genes to steer their party demeanor. The study appears in the proceeding of the institution bacteria Biological Sciences. Honey insects and insect are separated by more than hundred million years of organic process, and there are impinging difference of opinions in how they divvy up the work of affirming a biological group.

Implicit semantic compression

A natural tendency to keep natural language expressions concise can be perceived as a form of implicit semantic compression, achieved by omitting meaningless words or redundant meaningful words (especially to avoid pleonasms).[2]

Applications and advantages

In the vector space model, compacting the lexicon leads to a reduction of dimensionality, which lowers computational complexity and improves efficiency.

Semantic compression is advantageous in information retrieval tasks, improving their effectiveness in terms of both precision and recall.[3] This is due to more precise descriptors: the effect of language diversity is reduced and language redundancy is limited, which is a step towards a controlled dictionary.
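The effect on dimensionality can be shown with a small bag-of-words sketch. The documents, the mapping to hypernyms, and the helper names `vocabulary` and `bow_vector` are hypothetical and serve only as an illustration.

```python
from collections import Counter

# Hypothetical toy corpus and hypothetical compression mapping rules.
docs = [["wasp", "build", "nest"], ["bee", "build", "hive"]]
mapping = {"wasp": "insect", "bee": "insect",
           "nest": "facility", "hive": "facility"}


def vocabulary(docs):
    """Sorted list of distinct terms; its length is the vector dimension."""
    return sorted({tok for doc in docs for tok in doc})


def bow_vector(doc, vocab):
    """Term-count vector of a document over the given vocabulary."""
    counts = Counter(doc)
    return [counts[t] for t in vocab]


compressed = [[mapping.get(t, t) for t in doc] for doc in docs]

vocab_before = vocabulary(docs)        # bee, build, hive, nest, wasp
vocab_after = vocabulary(compressed)   # build, facility, insect

vectors_after = [bow_vector(d, vocab_after) for d in compressed]
print(len(vocab_before), len(vocab_after), vectors_after)
```

In this toy case the vector dimension drops from five to three, and the two documents, which shared only one term before compression, end up with identical vectors.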

As in the example above, it is possible to display the output as natural text (re-applying inflexion, adding stop words).

References

- [1] D. Ceglarek, K. Haniewicz, W. Rutkowski, Semantic Compression for Specialised Information Retrieval Systems, Advances in Intelligent Information and Database Systems, vol. 283, p. 111-121, 2010

- [2] N. N. Percova, On the types of semantic compression of text, COLING '82 Proceedings of the 9th Conference on Computational Linguistics, vol. 2, p. 229-231, 1982

- [3] D. Ceglarek, K. Haniewicz, W. Rutkowski, Quality of semantic compression in classification, Proceedings of the 2nd International Conference on Computational Collective Intelligence: Technologies and Applications, vol. 1, p. 162-171, 2010